Executive Summary

Poor API naming is not a style problem — it is a maintenance problem. Inconsistent naming forces developers to read documentation for every endpoint, slows integration timelines, creates ambiguity in multi-team codebases, and generates support tickets that should never exist. This article covers how my team establishes naming systems that stay coherent as APIs grow from a dozen endpoints to several hundred.

The Real Problem This Solves

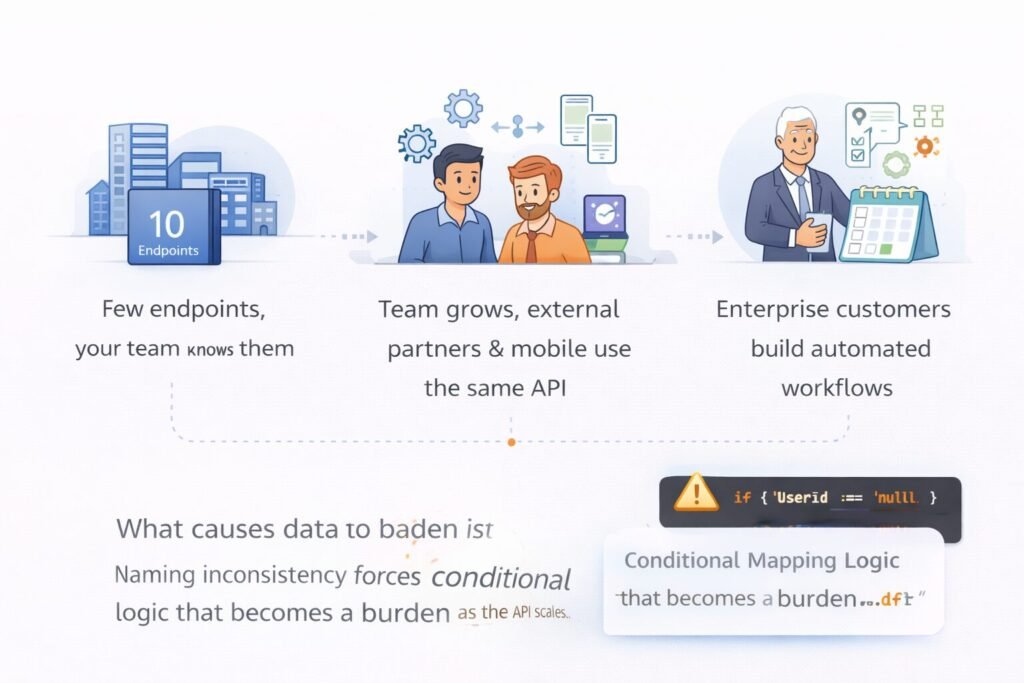

API naming feels like a low-stakes decision early in a product’s life. You have ten endpoints, your team knows what they all do, and the inconsistencies are manageable. Then the API grows. New engineers join. External partners integrate. Mobile teams consume the same endpoints as web clients. Enterprise customers build automated workflows on top of your platform.

At that point, every naming inconsistency becomes a tax. A field called user_id in one response and userId in another forces every client to write conditional mapping logic. An endpoint that uses DELETE /users/{id} for soft deletes but POST /users/{id}/archive for a logically identical operation in a different resource creates mental overhead that compounds across hundreds of integrations.

My team has audited APIs at companies that have been shipping for five or more years. The pattern is consistent: naming decisions made casually in year one become the most complained-about aspect of the API in year three. Retrofitting naming conventions onto a live API with external consumers is one of the most expensive technical operations a platform team can undertake. The cost of getting it right upfront is a few hours of design discussion. The cost of getting it wrong is months of migration work and broken client trust. If you are building a scalable REST API for SaaS applications, naming conventions belong in your design standards before the first endpoint ships — not after the first inconsistency surfaces in production.

The Foundation: Four Naming Principles That Do Not Bend

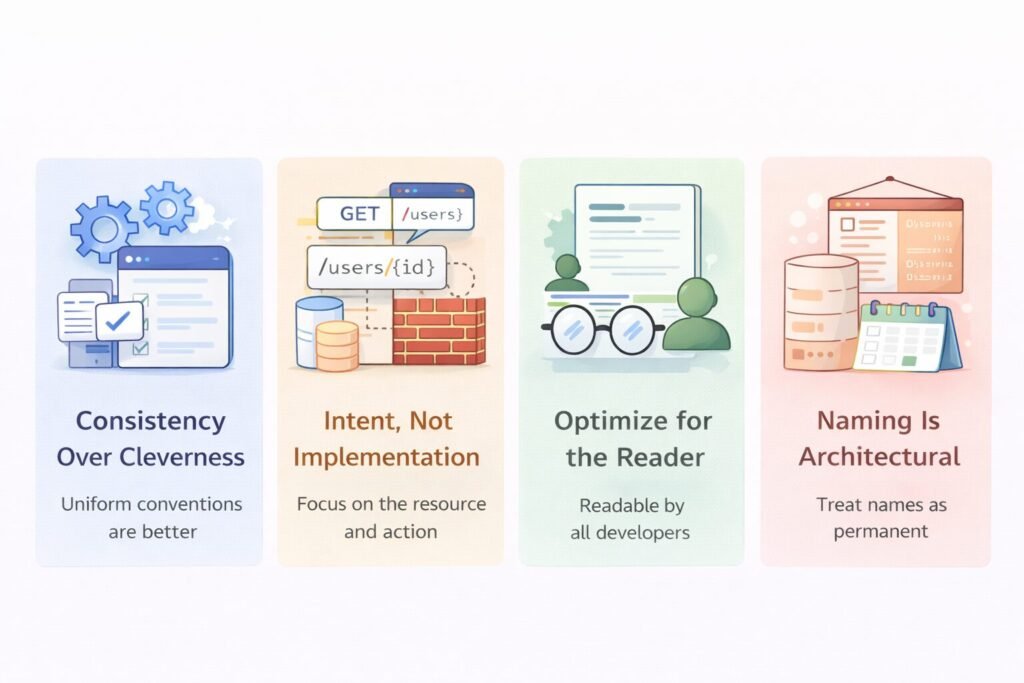

Before getting into specifics, my team operates from four principles that govern every naming decision. These are non-negotiable regardless of the protocol, the resource, or the team shipping the endpoint.

Consistency over cleverness. A naming convention that is slightly suboptimal but applied uniformly is vastly better than a theoretically perfect convention that gets interpreted differently by different engineers. The value of naming comes from predictability. Predictability requires uniformity.

Names communicate intent, not implementation. An endpoint named /getUserFromDatabase is leaking implementation details that will become wrong the moment the storage layer changes. /users/{id} communicates the resource and the operation. It stays accurate regardless of what happens behind the route handler.

Optimize for the reader, not the writer. API names get written once and read thousands of times. The engineer who names an endpoint spends thirty seconds choosing a name. Every developer who integrates with that endpoint spends time deciphering it forever. Optimize for the reader.

Treat naming decisions as architectural decisions. A field name in a public API response is as permanent as a database schema column. Both require a migration to change. Both have downstream consumers. Both should go through the same level of review. This is a core principle behind the complete guide to API design for production systems — naming is architecture, not formatting.

Resource Naming: Nouns, Plurals, and Hierarchy

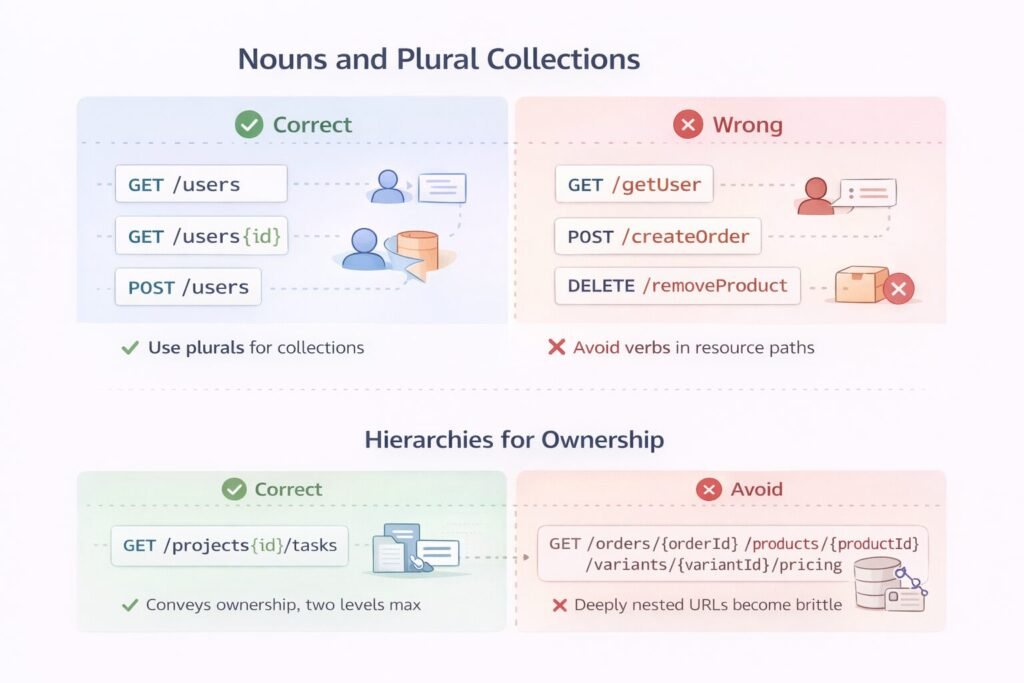

Always Use Nouns for Resources

REST resources are nouns. This is the rule that generates the most arguments in API design reviews and the one my team enforces most strictly.

# Wrong

GET /getUser

POST /createOrder

DELETE /removeProduct

# Correct

GET /users/{id}

POST /orders

DELETE /products/{id}The HTTP method carries the verb. The URL carries the resource identity. When you put a verb in the URL, you are doubling up on intent in a way that breaks the uniform interface principle and creates inconsistency the moment different engineers name similar operations differently. One engineer writes /createOrder, another writes /addOrder, a third writes /newOrder — and you have three names for the same concept.

Plural Nouns for Collections

Collections are plural. Individual resources within a collection are accessed by identifier. This is not a stylistic preference — it is a structural contract.

GET /users → returns collection

GET /users/{id} → returns single resource

POST /users → creates resource in collection

DELETE /users/{id} → removes specific resourceThe consistency here is what makes APIs learnable. A developer who knows how /users works can correctly guess how /orders, /products, and /invoices work without reading documentation. That transferability is the return on investment from consistent naming. This same predictability is what makes GraphQL vs REST for SaaS such an important architectural decision — REST’s learnability depends entirely on naming discipline.

Hierarchy That Reflects Ownership, Not Database Schema

Nested resources in URLs should represent ownership or containment relationships, not join table logic.

# Correct — an order owns its line items

GET /orders/{orderId}/items

# Avoid — this is a database join, not a resource hierarchy

GET /orders/{orderId}/products/{productId}/variants/{variantId}/pricingMy team sets a maximum nesting depth of two levels. Beyond two levels, the URL becomes brittle — every level of nesting creates a coupling between the URL structure and the data model. When the data model changes, deeply nested URLs break.

For relationships that do not map cleanly to ownership hierarchies, use query parameters or a dedicated relationship resource rather than deep nesting.

# Instead of deep nesting

GET /users/{userId}/organizations/{orgId}/teams/{teamId}/members

# Use a flatter approach with filtering

GET /team-members?userId={userId}&teamId={teamId}Casing Conventions and Why Mixing Them Is Dangerous

The Three Contexts With Different Rules

Casing decisions appear in three distinct contexts in an API, and each context has a different correct answer.

URL paths use kebab-case. Words are lowercase and hyphen-separated. This is not arbitrary — hyphens are URL-safe, underscore-containing URLs have historically had SEO and logging parsing issues, and lowercase URLs avoid case-sensitivity problems across different server environments.

/user-profiles

/payment-methods

/api-keysJSON body fields use camelCase in JavaScript ecosystems and snake_case in Python ecosystems. My team picks one and enforces it globally. The specific choice matters less than the consistency. What is not acceptable is mixing: user_id in one response and userId in another.

HTTP headers use Train-Case — capitalized words separated by hyphens:

Content-Type

Authorization

Idempotency-Key

X-Request-IdThe Mixed Casing Failure Mode

The most common naming failure my team encounters in API audits is mixed casing within a single API surface. It typically happens because different teams or different engineers worked on different parts of the API without a shared standard. The result looks like this:

{

"userId": 123,

"user_name": "alice",

"EmailAddress": "alice@example.com",

"phone-number": "555-0100"

}

```

Every client that consumes this response needs to handle four different casing conventions for four fields in the same object. This is not a minor inconvenience — it is a correctness hazard. Automated serialization libraries handle casing transformation, but only when the casing is consistent enough to configure a single rule. Mixed casing defeats every automated normalization strategy.

---

## Query Parameter Naming

Query parameters filter, sort, paginate, and scope collection responses. My team uses snake_case for query parameters consistently with JSON body field conventions.

### Filtering

Use the field name directly as the filter key. Do not add redundant prefixes.

```

# Clean

GET /orders?status=pending&customer_id=123

# Redundant

GET /orders?filter_status=pending&filter_customer_id=123

```

For range filters, my team uses `_min` and `_max` suffixes:

```

GET /orders?created_at_min=2024-01-01&created_at_max=2024-03-31

GET /products?price_min=10&price_max=100

```

### Pagination

Pagination parameters need to be consistent across every collection endpoint. My team standardizes on:

```

GET /users?page=2&per_page=50

```

or cursor-based:

```

GET /users?cursor=eyJpZCI6MTIzfQ&limit=50

```

Pick one pagination model and apply it everywhere. The most disorienting API experience for a developer is discovering that `/users` uses offset pagination but `/orders` uses cursor pagination with different parameter names.

### Sorting

```

GET /orders?sort_by=created_at&sort_order=descNot orderBy, not sortField, not order — pick one naming pattern and apply it globally.

Field Naming Inside Response Bodies

Timestamps

Every timestamp field in every response should be ISO 8601 format in UTC. The field names should be explicit about what they represent:

{

"created_at": "2024-01-15T10:30:00Z",

"updated_at": "2024-01-16T08:15:00Z",

"deleted_at": null,

"expires_at": "2024-07-15T00:00:00Z"

}Never use ambiguous names like date, time, timestamp, or modified. These tell the client nothing about what event the timestamp represents. Every timestamp field should answer the question: “when did what happen?”

Identifiers

Identifiers should always be named with the resource type as a prefix:

{

"user_id": "usr_123",

"order_id": "ord_456",

"product_id": "prd_789"

}The resource-prefixed id convention eliminates ambiguity when objects are nested. A response that contains both user_id and order_id is immediately parseable. A response that contains two fields both named id in nested objects is a serialization trap.

On prefixed identifier values: my team uses resource-type prefixes in the identifier value itself — usr_, ord_, prd_. This makes identifiers self-describing in logs, support tickets, and database queries. When a client pastes an ID into a support request, you know immediately which resource it refers to without looking it up.

Boolean Fields

Boolean field names should read as true/false statements, not questions or ambiguous states:

{

"is_active": true,

"is_verified": false,

"has_subscription": true,

"requires_action": false

}Avoid names like active, verified, subscription for boolean fields — these are ambiguous. Is active: false the same as active: null? Does subscription: true mean the user has one, or that the feature is enabled? The is_ and has_ prefixes eliminate ambiguity at the cost of two or three characters.

Monetary Values

Never store monetary values as floats. Never. Floating point arithmetic produces rounding errors that accumulate across calculations and produce incorrect totals in financial contexts.

My team represents monetary values as integers in the smallest currency unit — cents for USD, pence for GBP — alongside an explicit currency field:

{

"amount": 4999,

"currency": "usd"

}

```

`4999` is unambiguous. `49.99` as a float is a lawsuit waiting to happen at sufficient transaction volume. This convention becomes especially critical when you [integrate Stripe usage-based billing with Next.js](https://finlyinsights.com/integrate-stripe-usage-based-billing-with-next-js/) or [implement scalable pricing tiers for API-based SaaS](https://finlyinsights.com/scalable-pricing-tiers-for-api-based-saas/) — a naming inconsistency in monetary fields at billing layer is not a developer experience problem, it is a financial accuracy problem.

---

## Action Endpoints: When Nouns Are Not Enough

Pure REST naming breaks down for operations that do not map cleanly to CRUD. Canceling an order, resending a verification email, archiving a workspace, triggering a sync — these are actions, not resource creations.

My team handles these with a consistent action sub-resource pattern:

```

POST /orders/{id}/cancel

POST /users/{id}/verify

POST /workspaces/{id}/archive

POST /integrations/{id}/syncThe action is a verb, but it lives under the resource it acts on and uses POST consistently. This is distinguishable from resource creation because the action name is not a noun.

What my team avoids is mixing action patterns — some actions as POST to a sub-resource, others as PATCH to the parent resource with a status field, others as DELETE. Pick one pattern for actions and apply it uniformly. This consistency also matters at the API gateway level — if you are wondering whether API gateways handle rate limiting for action endpoints specifically, the answer depends heavily on how predictably those endpoints are named and routed.

Error Response Naming

Error responses are part of the API contract and need the same naming discipline as success responses. My team standardizes on:

{

"error": {

"code": "validation_failed",

"message": "The request body contains invalid fields.",

"details": [

{

"field": "email",

"code": "invalid_format",

"message": "Must be a valid email address."

}

]

}

}Key decisions here:

code fields use snake_case strings, not integers. An error code of 1042 tells a developer nothing without a lookup table. invalid_format is self-documenting.

message fields are human-readable, not machine-parsed. If your client is switching on the message string to determine error handling logic, that is a bug in your API design. The code field carries machine-readable semantics. The message field carries human-readable context.

Field-level errors are nested under details, not flattened. A flat error structure that can only report one error at a time forces validation round-trips. Return all field errors in a single response.

The naming of error codes connects directly to how clients handle failures. If you are thinking through when an API should return 400, whether an API should always return 200, or whether an API should return 500, the answer in each case depends on your error taxonomy — and that taxonomy starts with how you name error codes. Developers who hit a wall with a vague error can reference your guide on how to fix an API error or how to fix API error 400 only if the error names are descriptive enough to be searchable in the first place.

Security Implications of Naming

Naming decisions expose information about your system architecture in ways that create security surface area.

Implementation-leaking names tell attackers about your stack. An endpoint at /api/v1/users.php reveals the server technology. A field named mysql_row_id reveals the database. A header called X-Django-Version reveals the framework. Strip implementation details from all externally visible names.

Predictable identifier naming enables enumeration attacks. If your user identifiers are sequential integers — user_id: 1, user_id: 2 — an attacker can iterate through the entire user base. My team uses prefixed UUIDs or similar non-sequential identifiers for all externally exposed resource IDs. This connects directly to common security vulnerabilities in API authentication — predictable identifiers are one of the most exploited attack vectors in API security audits.

Sensitive field names advertise sensitive data even when the values are redacted. A field named internal_credit_score in an API response — even if the value is null for most clients — signals that the field exists and may be accessible. My team omits sensitive fields entirely from responses where the client lacks permission, rather than returning them with null values. This pairs directly with role-based access after payment patterns — field visibility should be gated by role at the serialization layer, not just by business logic.

For teams building authentication into their naming systems, understanding what a JWT is in an API, the safest way to store JWT tokens, and whether cookies are safer than localStorage all feed into how you name and structure authentication-related fields in your API responses.

Performance Bottlenecks

Verbosity Tax on High-Frequency Endpoints

Descriptive naming is the right default, but verbosity has a cost at scale. A response field named customer_billing_address_street_line_one is unambiguous but adds bytes to every response. At low volume, this is irrelevant. At hundreds of millions of API calls per day, response payload size is a meaningful infrastructure cost.

My team balances descriptiveness with practicality by applying strict naming conventions to public-facing fields and allowing abbreviated internal field names only in high-frequency, well-documented internal APIs where payload size is a known constraint.

Routing Performance and Named Path Parameters

Deeply nested URLs with multiple named path parameters add router matching overhead. Most modern routers handle this efficiently, but at extreme scale — millions of requests per second — route complexity matters. Flat, well-named resource hierarchies are faster to route than deeply nested ones. This is a secondary concern compared to correctness, but it is a real one in high-traffic systems where API timeouts and retries in distributed systems are already a concern.

Common Mistakes My Team Has Seen

- Inconsistent plurality.

/userfor a collection endpoint instead of/users. Then someone adds/ordersplural and you have mixed conventions in the same API. - Verbs in resource URLs.

/getUsers,/fetchOrder,/deleteProduct. The HTTP method is the verb. The URL is the noun. - Ambiguous field names.

date,status,typewith no qualifying context. These names force developers to read documentation for every field. - Exposing database column names directly. Auto-generating API responses from ORM models produces field names that reflect schema implementation, not domain semantics.

- Inconsistent identifier field names. Sometimes

id, sometimesuserId, sometimesuser_id, sometimesuid— across different endpoints in the same API. - Different conventions across microservices. Each service ships its own naming style, and the API gateway exposes them all under the same domain. Clients experience the inconsistency as a single product failure.

- No naming standard document. Conventions that live only in the heads of the engineers who created them do not survive team growth. My team maintains a written API design standards document that is referenced in every API design review. Enforcing this alongside API versioning strategies means your naming conventions and your compatibility guarantees evolve together — not in separate silos.

When Not to Apply These Conventions

Strict naming conventions are worth the discipline for external APIs, partner integrations, and any surface that will outlive the team that built it. There are contexts where the overhead is not justified.

Throwaway internal tooling consumed by one service and one service only does not need convention enforcement. The cost of migration is near-zero and the consumer is co-located with the producer.

Event schemas in internal message queues follow different conventions than HTTP APIs. Kafka topic names, SQS message attributes, and event envelope structures have their own ecosystem conventions that may not align with REST naming standards. Do not force HTTP API naming onto event-driven systems. If you are building automated meeting workflows with AI agents or similar event-driven automation, your event schema naming lives in a different design space than your HTTP API naming.

Third-party API wrappers where you are proxying or adapting an external API should match the upstream naming where it reduces confusion, not force your internal conventions onto a shape that was never designed for them.

Prototype APIs built for rapid discovery should not be gold-plated with naming infrastructure before the shape of the API is known. Lock in conventions once the resource model has stabilized.

Enterprise Considerations

Enterprise clients integrate your API into systems that will run for years and are maintained by teams that rotate. The engineer who built the integration in year one is often not the engineer maintaining it in year three.

This makes naming stability a contractual concern. My team communicates field-level deprecations with the same formality as endpoint deprecations. A field rename is a breaking change. A semantic change to what a field value means is a breaking change. Enterprise SLAs should include naming stability commitments alongside uptime commitments.

SDK generation: Most enterprise-scale APIs ship SDKs. SDK method names, parameter names, and model property names derive from API naming. If your naming is inconsistent, your SDK is inconsistent, and the inconsistency is now in the client’s codebase — not just your documentation. Consistent API naming is a prerequisite for generating usable SDKs automatically. Teams using OpenAPI to build and document APIs get SDK generation as a downstream benefit — but only if the naming in the spec is consistent enough for generators to produce clean output.

Developer portal searchability: Enterprise developer portals index endpoint and field names. When a developer searches for “invoice status,” they expect to find relevant endpoints. If your API uses billing_state instead of invoice_status, search fails and support tickets get filed. Name things what your users will search for. Teams that automate invoice generation with PDFKit or similar workflows depend on field names being exactly what they expect — a mismatch between the documentation and the actual field name in a billing response is the kind of bug that surfaces in production at the worst possible moment.

Multi-factor authentication flows introduce naming decisions that affect security UX. My team has found that teams building multi-factor authentication with Auth0 and NextAuth often underestimate how much the naming of auth-related fields — mfa_required, auth_method, challenge_type — affects how cleanly downstream systems can handle authentication state.

Cost & Scalability Implications

Poor naming is a hidden operational cost that compounds with scale.

Support volume: Ambiguous field names and inconsistent conventions generate developer support tickets. At low integration volume, this is manageable. At thousands of integrations, a naming ambiguity that generates one support ticket per hundred integrations becomes a full-time support burden.

SDK maintenance: Every naming inconsistency that makes it into a public SDK becomes a migration when it is fixed. SDK migrations have adoption curves that stretch over years. My team has seen APIs carry deprecated SDK methods for four years because the naming was inconsistent in v1 and the fix required a breaking SDK change.

Documentation overhead: Consistent naming reduces documentation burden. If every collection endpoint follows the same pattern, you document the pattern once. If every pagination parameter is named identically, you document pagination once. Inconsistency requires documenting every exception individually, which multiplies documentation maintenance linearly with the number of inconsistencies.

Rate limiting surface area: Consistent endpoint naming makes implementing rate limiting for an API significantly cleaner. When endpoints follow predictable patterns, you can apply rate limiting rules by route pattern rather than enumerating individual endpoints. Inconsistent naming forces you to manage rate limit configurations per-endpoint, which does not scale. Understanding what a good API rate limit is for public APIs and how to avoid hitting API rate limits both become easier conversations when your endpoint naming is predictable enough for clients to reason about consumption patterns.

Onboarding time: New engineers joining a team with a well-named API reach productivity faster because the API is learnable from its structure. New engineers joining a team with inconsistent naming spend weeks building a mental map of exceptions. This is a real cost at scale — multiply the onboarding tax by every engineer who will ever work with the API. Teams using Notion to manage knowledge and workflows often try to compensate for poor API naming with extensive internal documentation — but documentation that explains naming inconsistencies is documentation that should not need to exist.

Implementing This Correctly: A Strategic Perspective

Naming conventions do not enforce themselves. My team treats the API design standards document as a living artifact with real governance — it is referenced in pull request templates, it is part of new engineer onboarding, and API design reviews explicitly check compliance before endpoints ship.

The implementation has three phases. First, establish the standard before you have an API to name. The worst time to create a naming convention is after you have shipped twenty inconsistent endpoints. The standard should exist before the first endpoint is designed.

Second, enforce it at the boundary. My team uses OpenAPI spec linting — tools like Spectral — to catch naming violations automatically in CI. A field that violates casing conventions fails the build the same way a failing test does. Automated enforcement is the only enforcement that scales. This connects to broader API rate limiting strategies for high-traffic applications — consistency at the naming layer makes every downstream enforcement mechanism more reliable.

Third, treat the naming standard as part of your API’s public contract. When external developers build on your API, they are betting that the names they write into their code today will still work years from now. Naming stability is a promise, not a preference. The same discipline that goes into designing idempotent APIs at scale should go into naming — both are correctness guarantees, not style preferences.

APIs that are well-named get integrated faster, generate fewer support requests, produce better SDKs, and earn the kind of developer trust that turns into platform adoption. Naming is not cosmetic. It is architecture. Treat it accordingly.

Finly Insights Team is a group of software developers, cloud engineers, and technical writers with real hands-on experience in the tech industry. We specialize in cloud computing, cybersecurity, SaaS tools, AI automation, and API development. Every article we publish is thoroughly researched, written, and reviewed by people who have actually worked in these fields.