Executive Summary

Backward compatibility is not a feature you add to an API — it is a discipline you maintain from the first commit. Every field removed, every type changed, every behavior modified without a compatibility layer is a broken integration somewhere in production. This article covers how my team designs APIs that absorb change without breaking clients, the engineering decisions that make compatibility sustainable at scale, and the organizational practices that prevent compatibility debt from accumulating silently.

The Real Problem This Solves

Breaking an API is easy. You rename a field because the original name was ambiguous. You change a string field to an enum because you want validation. You remove an endpoint that analytics says has zero traffic — but the analytics are not capturing the enterprise customer who calls it from a nightly batch job. Three weeks later, a customer’s integration is silent-failing, their data pipeline has been producing incorrect output, and they are filing a severity-one support ticket that escalates to your executive team.

The cost of a breaking change is asymmetric. The engineer who made the change spent thirty minutes on it. The integration team affected by it might spend three days diagnosing, fixing, and redeploying. Multiply that across ten integrations and you have a month of engineering time destroyed by a field rename that felt trivial at commit time.

My team has worked with SaaS companies that lost enterprise deals specifically because a prospect’s technical team discovered a history of undocumented breaking changes during due diligence. Enterprise buyers run API stability assessments before signing large contracts. A changelog full of “breaking change: renamed field X to Y” is a red flag that signals immature API governance, regardless of how good the product itself is.

Backward compatibility is not about never changing your API. It is about changing your API in ways that do not require existing clients to change simultaneously. These are fundamentally different problems, and conflating them is what leads teams to either freeze their API in amber or break it carelessly.

What Backward Compatibility Actually Means

A change is backward-compatible if a client built against the previous version of the API continues to work correctly against the new version without any modifications. This definition has two critical implications that most teams understate.

First, “continues to work correctly” means behavioral correctness, not just technical execution. An API change that does not break the client’s code but silently changes the semantics of a field — changing what a status value means, changing the unit of a numeric field from cents to dollars, changing the timezone assumption of a timestamp — is a breaking change even if no client throws an exception.

Second, “without any modifications” means the client’s code, not just the client’s configuration. A change that requires clients to update an SDK version, regenerate type definitions, or modify a configuration file is a breaking change for clients who cannot absorb that update on your timeline.

My team maintains an explicit compatibility contract that defines both dimensions — structural compatibility and behavioral compatibility — and treats violations of either as breaking changes that require versioning protocol.

The Compatibility Classification System

Before any API change ships, my team classifies it against a four-tier system. This classification determines the process required before deployment.

Tier 1: Unconditionally Safe

These changes can ship without any client notification or versioning ceremony:

- Adding new optional fields to response bodies

- Adding new optional request parameters with documented defaults

- Adding new endpoints

- Adding new values to error response

detailsarrays - Relaxing validation constraints — accepting a wider range of input values

- Improving error message clarity without changing error codes

- Adding new webhook event types that clients can ignore

The common property of Tier 1 changes is that clients who do not know about them continue to work exactly as before. A client that ignores unknown fields is unaffected by new fields. A client that does not send the new optional parameter gets the documented default behavior.

Tier 2: Safe With Communication

These changes are technically non-breaking but affect client behavior in ways that require proactive communication:

- Adding new required fields to requests — only safe if the field has a default that produces correct behavior when omitted

- Changing the format of an identifier — from integer to string UUID, for example — even when both formats are accepted during a transition period

- Adding pagination to previously unpaginated endpoints — clients that assume all results are returned in a single response will miss data

- Changing rate limit thresholds — clients operating near the current limits will begin receiving 429 responses

Tier 2 changes ship on a communication timeline, not a versioning timeline. My team sends advance notice to all API consumers, documents the change in the API changelog, and monitors for client errors in the window after deployment.

Tier 3: Breaking With Versioning Path

These changes are breaking for some clients and require a versioning protocol:

- Removing fields from responses

- Renaming fields

- Changing field types

- Removing endpoints

- Changing HTTP status codes for existing conditions

- Tightening validation — rejecting inputs previously accepted

- Changing authentication or authorization requirements

Tier 3 changes ship in a new API version with a migration guide, a deprecation timeline for the old behavior, and active client outreach. The versioning strategy and deprecation protocol govern the process. The old behavior is maintained in parallel until the deprecation window closes.

Tier 4: Never Ship Without Explicit Client Agreement

These changes are breaking for all clients and cannot be handled by standard versioning alone:

- Changing the security model in ways that invalidate existing credentials

- Changing the data ownership model — who owns what data and who can access it

- Changing fundamental behavioral contracts — what an operation actually does to data

- Removing entire API versions before the sunset date

Tier 4 changes require individual client communication, contract amendments for enterprise customers with stability SLAs, and explicit confirmation from affected clients before deployment.

Structural Patterns for Maintaining Compatibility

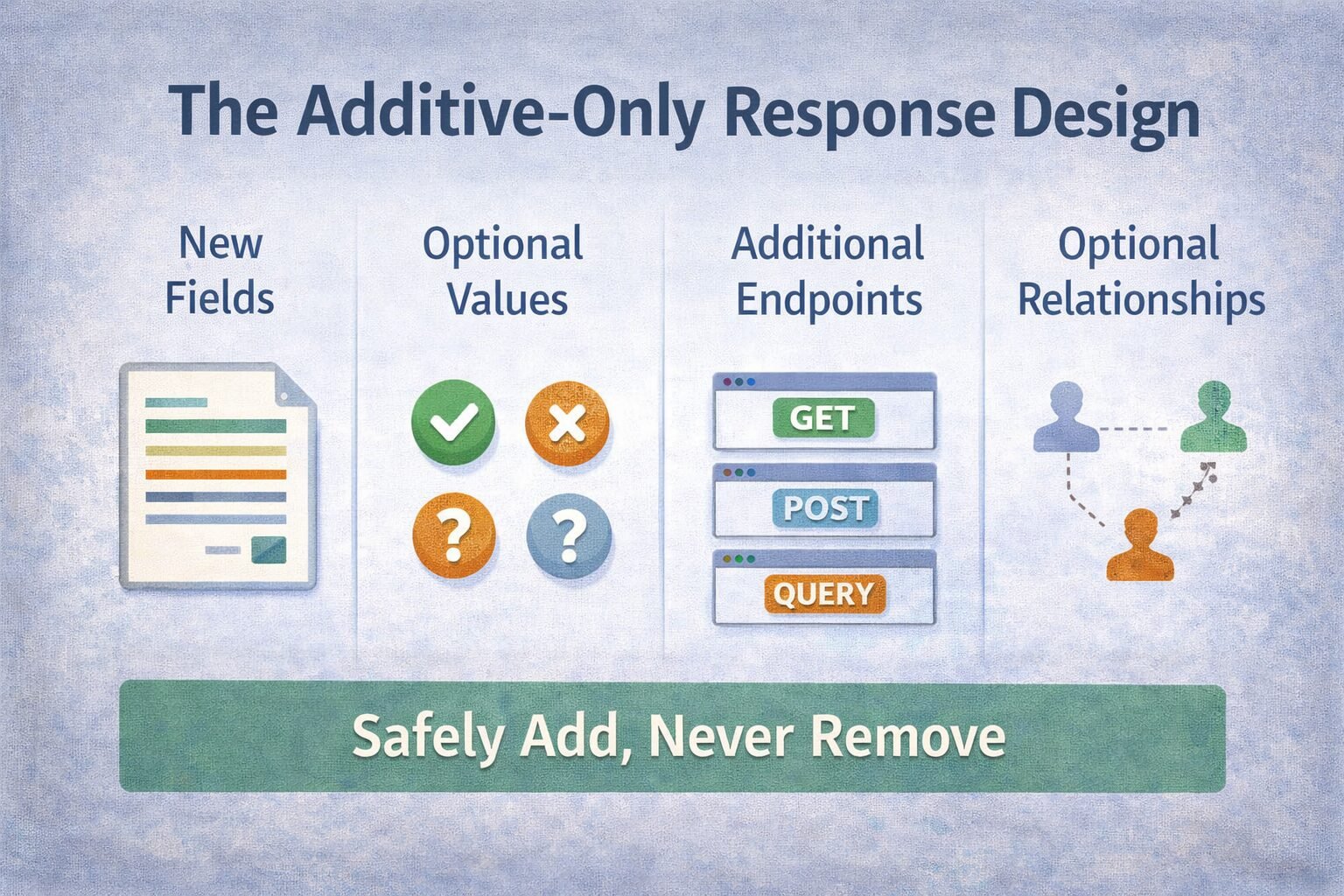

The Additive-Only Response Design

The single most powerful technique for maintaining response compatibility is designing responses to be extended by addition, never by modification. My team designs every response object with the assumption that it will grow over time and that clients must be able to ignore fields they do not recognize.

This requires a corresponding client-side discipline: clients must not fail on unknown fields. A client that deserializes a JSON response into a strict schema and throws an exception on unknown properties is a client that will break on every Tier 1 change. My team documents this requirement explicitly in the API contract — clients must implement tolerant readers that ignore unknown fields — and SDKs are generated with permissive deserialization configured by default.

For request bodies, additive-only is harder to maintain because request validation is server-side. My team’s rule: any new request field must be optional with a sensible default. If a field cannot be optional — if it is genuinely required for correctness — the operation itself has changed semantically enough to warrant a new endpoint or a new API version.

The Expansion Pattern for Enums

Enums are one of the most common sources of backward-compatibility breaks. A client that handles a status field with a switch statement over known values will fail or produce incorrect behavior when a new value is added. This is a Tier 1 change structurally — adding a new enum value is additive — but a behavioral breaking change for clients who have not implemented a default case.

My team addresses this with two practices. First, every enum field in the API contract documents explicitly that new values may be added in the future and that clients must implement a default case for unknown values. This is a client contract requirement, not just advice.

Second, for enums where new values change processing behavior significantly — payment statuses, lifecycle stages, error codes — my team adds new values with advance notice in the Tier 2 communication process. The new value is announced two weeks before it appears in live traffic, giving clients time to implement handling before encountering it in production.

Nullable Field Introduction

When my team needs to add a field that does not have a meaningful value for all existing records, the field is introduced as nullable with explicit documentation of what null means for that field. A new cancelled_at timestamp field is null for orders that have never been cancelled — not absent, not zero, null with documented semantics.

The critical mistake to avoid is introducing a new required non-nullable field. Every existing record in the database must have a valid value for the field before it can be non-nullable in the API response. If existing records genuinely have no meaningful value for the field, the field must be nullable. If you force a non-null default onto existing records, you are changing their semantic meaning without client consent — a behavioral breaking change.

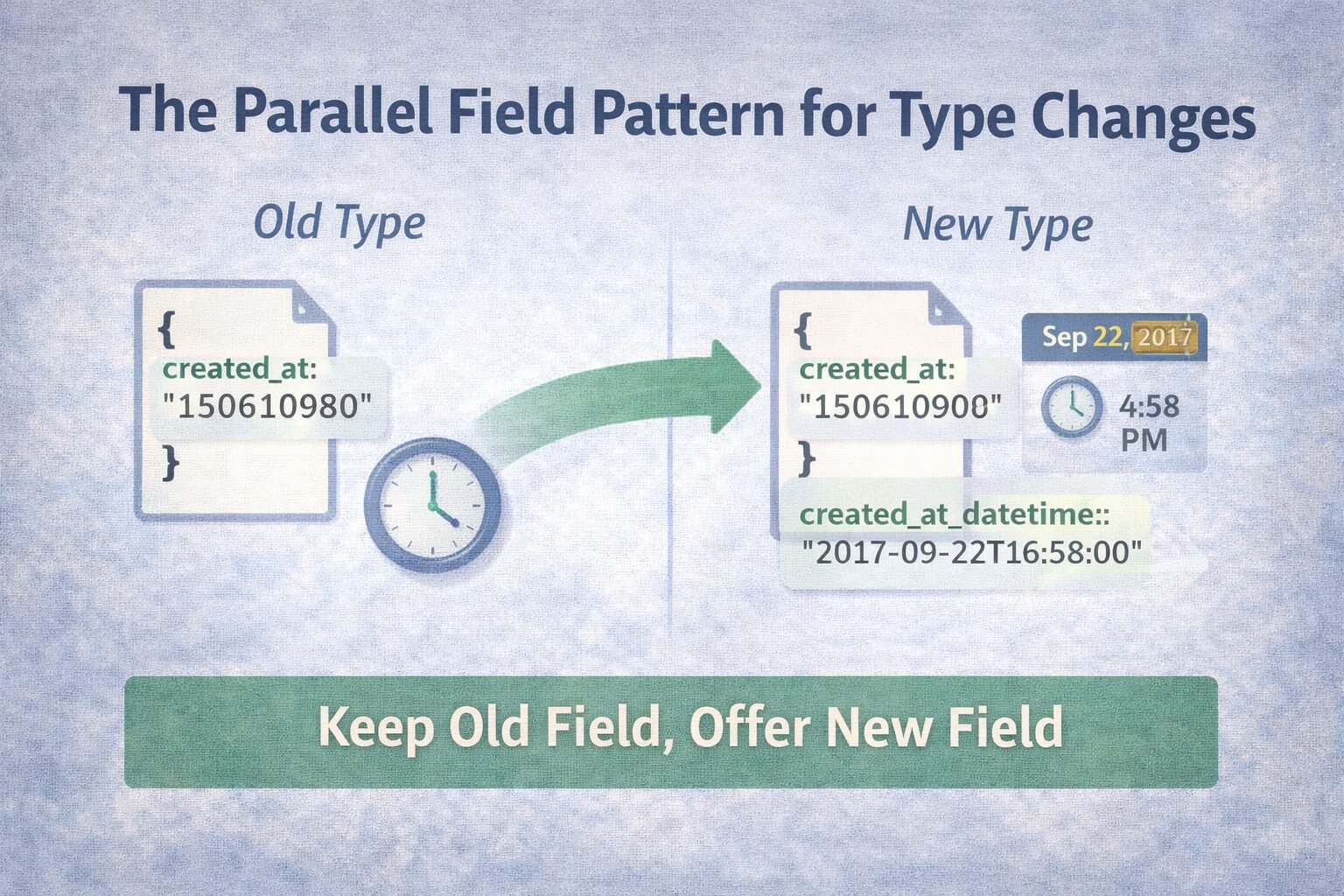

The Parallel Field Pattern for Type Changes

When a field’s type must change — an integer ID that needs to become a string UUID, a flat string that needs to become a structured object — the parallel field pattern allows a backward-compatible transition.

The new field is introduced alongside the old field under a new name. Both fields are populated in responses during the transition period. The old field continues to accept and return the old type. The new field returns the new type. Clients migrate to the new field on their own timeline. The old field is deprecated with a sunset date after sufficient migration has occurred.

My team used this pattern when migrating from integer IDs to prefixed string UUIDs across a platform. The transition ran for six months with both id (integer) and uid (string) present in every response. Migration tracking showed 94% of traffic on the new field before we sunset the old one. The six months felt long at the time — in retrospect, it was the right investment against a change that touched every integration.

This directly informs API naming conventions practice — when a field must be renamed for clarity, the parallel field pattern is the only backward-compatible path. Rename-in-place is a breaking change; parallel-then-deprecate is not.

Behavioral Compatibility: The Harder Problem

Structural compatibility is verifiable with tooling — field presence, types, and HTTP status codes can be checked automatically. Behavioral compatibility requires human judgment and explicit documentation of behavioral contracts.

Documenting Behavioral Contracts

Every API endpoint in my team’s systems has a behavioral contract document that specifies:

- What the operation does to system state

- What conditions produce each response code

- What side effects the operation triggers — emails sent, webhooks fired, downstream services called

- What ordering guarantees exist for concurrent operations

- What consistency guarantees exist — is the response reflecting real-time state or eventually consistent state

This documentation lives in the OpenAPI spec as extended descriptions, not in a separate wiki that drifts from the implementation. Contract-first API development makes behavioral contract documentation a natural part of the design process — the behavior is specified before implementation, not documented after.

When a behavioral contract needs to change, the change is evaluated against the compatibility classification system exactly as a structural change would be. A change to when an order transitions from pending to processing is a breaking behavioral change even though no field names or types change.

Side Effect Stability

Side effects are the most invisible dimension of behavioral compatibility. When a client calls POST /orders, they expect an order to be created and a confirmation email to be sent — because that is what the endpoint did when they built their integration. If the side effects change — a new webhook fires, an additional downstream service is called, the confirmation email format changes — clients who depend on those side effects as part of their workflow break silently.

My team documents all side effects explicitly in the API contract and treats side effect changes as behavioral breaking changes. Adding a new side effect is a Tier 2 change — technically additive but requiring communication. Removing or changing an existing side effect is a Tier 3 change requiring versioning.

For webhook-driven integrations specifically, this means treating the webhook event schema with the same compatibility discipline as the REST API schema. A webhook payload is an API contract. Adding fields is Tier 1. Removing or renaming fields is Tier 3. My team applies the same idempotency design to webhook delivery that it applies to REST endpoints — webhook consumers must handle duplicate delivery, and the webhook contract must be stable enough for consumers to build reliable handlers.

Error Response Compatibility

Error responses are part of the API contract and must maintain backward compatibility with the same discipline as success responses. This is consistently under-prioritized because error paths are tested less thoroughly than happy paths and are often modified without considering client impact.

My team’s error compatibility rules:

Error codes are permanent. Once an error code appears in production responses, it cannot be removed or renamed. It can be deprecated — documented as superseded by a newer code — but must continue to be returned for clients that handle it. The machine-readable code field in the error response envelope is as stable as any other field in the API contract.

Error conditions are documented as behavioral contracts. If POST /payments returns a payment_method_declined error when the card is declined, that behavioral contract is documented and cannot change without versioning. Changing the error code returned for a specific failure condition is a breaking change for clients that switch on error codes.

New error codes are Tier 2 changes. Adding a new error code that previously would have returned a generic validation_failed is technically additive but affects clients who handle validation_failed and expect it to cover all validation scenarios. Advance communication is required. Understanding when an API should return 400 versus 422 versus 500 — and committing to those decisions in the contract — prevents the status code instability that breaks client error handling silently.

Compatibility Testing Infrastructure

Contract Testing in CI

My team runs automated contract tests in CI that verify backward compatibility on every pull request that touches API behavior. The test suite:

Schema regression tests compare the response schema of every endpoint against the last known-good schema. New fields pass. Missing fields fail. Type changes fail. This catches unintentional structural breaking changes — a developer who renames a field in the serializer without realizing it is a breaking change will see a failing test before the PR merges.

Behavioral contract tests verify that documented behavioral contracts hold. If the contract specifies that POST /orders returns 201 on success, a code change that returns 200 fails the test. If the contract specifies that order creation triggers an order.created webhook, a refactor that removes the webhook trigger fails the test.

Consumer contract tests using Pact verify that the implementation satisfies every consumer’s declared dependencies. When a consumer declares that it reads payment.amount and payment.currency from the payments endpoint, the payments service CI validates that both fields are present and typed correctly before deployment.

This infrastructure is the automation layer behind contract-first API development — the contract is enforced continuously, not just at design time.

Compatibility Monitoring in Production

CI tests catch intentional changes. Production monitoring catches unintentional drift — a dependency upgrade that changes serialization behavior, a database schema change that propagates to the API response, a configuration change that alters validation logic.

My team runs a production compatibility monitor that makes scheduled calls to every API endpoint using a set of pinned request fixtures and validates responses against the pinned expected schema. Any deviation from the expected schema triggers an alert. This monitoring runs continuously and catches compatibility drift within minutes of deployment.

Pagination and Compatibility

Pagination strategy changes are among the most disruptive backward-compatibility events in API lifecycle management. A client built on offset pagination that encounters a cursor-only endpoint will either silently miss records or fail with an unrecognized parameter error.

My team treats pagination strategy as a behavioral contract. The pagination model for each collection endpoint is documented in the contract and cannot be changed without versioning. When a collection endpoint needs to migrate from offset to cursor pagination — a necessary migration as datasets scale — the migration follows the same protocol as any Tier 3 change: new version, migration guide, deprecation timeline.

During the migration window, my team supports both pagination models on the same endpoint using the presence of the cursor or page parameter as the discriminator. Clients sending page get offset pagination behavior. Clients sending cursor get cursor pagination behavior. This allows gradual client migration without a hard cutover.

Security Changes and Compatibility

Security changes are the hardest category of backward-compatible design because security improvements sometimes require changes that break existing integrations. My team navigates this tension with a hierarchy: security correctness takes precedence over backward compatibility, but security changes must be executed in ways that minimize unnecessary breakage.

Authentication scheme changes — migrating from API keys to OAuth 2.0, rotating signing keys, changing token formats — are planned as migration events with explicit timelines. Both authentication schemes are supported simultaneously during the migration window. Clients who fail to migrate by the deadline are contacted individually before the old scheme is disabled. Understanding what a JWT is in an API context, the safest way to store JWT tokens, and whether cookies are safer than localStorage all feed into how cleanly an authentication migration can be executed — a migration to a storage model that clients cannot implement easily will have poor adoption regardless of how well the API-side migration is designed.

Permission model changes — adding new required scopes, splitting existing scopes, changing what a scope grants access to — are Tier 3 changes at minimum. Existing tokens with the old scope model must continue to function during the migration window. Tokens issued after the change use the new model. OAuth 2.0 scope design that anticipates scope granularity evolution reduces the frequency of permission model breaking changes — fine-grained scopes from the start are easier to evolve than coarse-grained ones.

Vulnerability patches that require breaking changes ship without the standard deprecation window. Security is the one category where my team does not maintain backward compatibility for the vulnerable behavior. However, the patch is documented as a breaking change with specific migration guidance, and affected clients are notified immediately with the technical detail they need to update their integrations.

Common Mistakes My Team Has Seen

- Removing fields that analytics show as “unused.” API analytics capture what clients send and receive, not what they depend on. A field that appears in 0% of client requests may still be depended on by clients who read it from responses. Query logs are not dependency maps.

- Changing error codes between environments. A staging environment that returns different error codes than production creates integrations that work in testing and break in production. Error contracts must be identical across environments.

- Treating internal APIs as exempt from compatibility discipline. Internal APIs become external APIs through acquisitions, partner integrations, and the natural expansion of “internal” over time. Design every API with compatibility discipline from day one.

- No client inventory. Breaking change impact assessment requires knowing who your clients are. My team maintains a client registry — every integration, every consumer, every SDK installation — to assess the blast radius of proposed changes before they ship.

- Implicit behavioral contracts. Contracts that are not documented are contracts that cannot be maintained. Every behavioral assumption a client might make about your API needs to be either explicitly documented as guaranteed or explicitly documented as not guaranteed.

- Compatibility testing only at the happy path. Error paths, edge cases, and pagination boundaries are where silent breaking changes most commonly hide. Test compatibility across the full behavioral surface, not just the common cases.

- Confusing versioning with compatibility. Versioning is what you do when compatibility cannot be maintained. It is a last resort, not a substitute for compatibility discipline. Teams that version carelessly accumulate version debt that eventually collapses the maintainability of the entire API surface.

When Not to Prioritize Backward Compatibility

Backward compatibility has real costs — maintaining old behavior in parallel with new behavior, running compatibility test suites, tracking client migration progress. These costs are the right investment in specific contexts and are overhead in others.

Pre-launch APIs with no external consumers have no compatibility obligation. Iterate freely until the API surface stabilizes, then lock in compatibility discipline before opening to external clients.

Internal APIs with coordinated deployments where producer and consumer deploy together can accept breaking changes as long as the deployment is coordinated. When both sides are in the same repository or the same deployment pipeline, compatibility is a deployment coordination problem, not an API design problem.

Experimental API surfaces explicitly marked as unstable in the contract — stability: experimental in the spec, X-API-Stability: experimental in response headers — can be changed without the full compatibility protocol. Clients who opt into experimental endpoints accept instability as part of the contract. This is the explicit carve-out that allows innovation without compromising the stability of the mature API surface.

Security-critical changes where maintaining backward compatibility preserves a vulnerability. Security overrides compatibility, but the override must be documented and communicated explicitly.

Enterprise Considerations

Enterprise customers evaluate backward compatibility posture before signing contracts. My team has standardized on a public API stability commitment that enterprise customers receive as a contractual addendum:

The commitment specifies: minimum deprecation notice period (twelve months for Tier 3 changes in the stable API surface), communication channels for breaking change announcements, the stability tier of each API surface (stable, beta, experimental), and the support process for clients who cannot migrate within the standard deprecation window.

This commitment is not a burden — it is a sales asset. Enterprise procurement teams who review the stability commitment alongside the API changelog have concrete evidence that the vendor takes integration stability seriously. My team has been told directly by enterprise customers that the stability commitment documentation was a deciding factor in the purchase decision.

SDK versioning alignment: Enterprise clients using generated SDKs need SDK version compatibility to align with API version compatibility. An API version that is compatible with the previous version must be served by an SDK that is also backward-compatible. My team maintains a compatibility matrix — API version X is compatible with SDK versions Y.Z through Y.W — that enterprise clients reference when planning integration maintenance.

Stability audits: For enterprise clients with long integration cycles, my team offers a pre-integration stability audit. We review the client’s proposed integration against our known change pipeline and flag any endpoints or fields that are planned for modification within the client’s integration horizon. This proactive communication prevents the scenario where a client completes a six-month integration project only to discover that a core endpoint is scheduled for modification in month seven.

Cost & Scalability Implications

The Maintenance Cost of Parallel Behavior

Maintaining backward-compatible behavior in parallel with new behavior has a direct engineering cost. A field that exists in both old and new form — the parallel field pattern — requires both forms to be populated in every response, validated in every request handler, and tested in every test suite. At a small number of fields in transition, this overhead is manageable. At dozens of fields in various stages of the migration lifecycle, the maintenance surface becomes significant.

My team manages this with aggressive migration tracking and sunset discipline. Every compatibility shim has a documented sunset date. Compatibility shims that outlive their sunset date without active client migration become technical debt items in the quarterly engineering roadmap. The migration completion rate — percentage of clients who have migrated off deprecated behavior — is tracked as an operational metric.

Test Suite Scalability

Compatibility test suites grow with API surface and version history. A well-maintained compatibility test suite that covers every endpoint’s schema and behavioral contract can become expensive to run as the API scales to hundreds of endpoints.

My team manages test suite cost with tiered execution. Full compatibility tests run on every pull request to the API layer. A subset of high-risk compatibility tests — covering the most heavily used endpoints and the most recent breaking change migration paths — run on every commit. The full suite runs nightly. This balances CI speed with coverage completeness.

The Cost of Not Investing in Compatibility

The cost calculation that most teams miss is the cost of not investing in backward compatibility. Each breaking change that reaches production generates: support ticket volume from affected clients, engineering time to diagnose and document the break, engineering time to implement a migration path, account management time for enterprise escalations, and reputational cost with the developer community.

My team tracks “compatibility incidents” — production breaking changes that reached clients without proper versioning protocol — as an engineering quality metric alongside error rate and deployment frequency. Reducing compatibility incidents to zero is a achievable goal with the right infrastructure. The investment in compatibility testing, monitoring, and process pays back in reduced support burden within two quarters in our experience.

Implementing This Correctly: A Strategic Perspective

Backward compatibility is a discipline that compounds over time. An API that has maintained compatibility for three years is exponentially more valuable than one that has maintained it for six months — because the trust it has earned translates directly into integration velocity for new clients who evaluate the changelog before building.

My team’s implementation starts with the compatibility classification system and the behavioral contract documentation. These two artifacts — the classification taxonomy and the contract documents — are the governance foundation. Without them, compatibility decisions are made ad hoc by individual engineers who cannot consistently evaluate the blast radius of their changes.

The second layer is the testing infrastructure: schema regression tests, behavioral contract tests, and consumer contract tests in CI. Compatibility that is not tested automatically degrades under feature development pressure. Every engineer on the team should be able to ship API changes confidently because the tests will catch compatibility regressions, not because every engineer has memorized the compatibility contract.

The third layer is the operational monitoring: production compatibility monitors, client migration tracking, and the stability commitment documentation. This layer is what converts technical compatibility discipline into business value — it is the evidence that enterprise customers evaluate, the metric that support teams reference, and the foundation of the developer trust that drives platform adoption.

APIs that maintain backward compatibility are APIs that scale without breaking the ecosystem they have built. Every client integration represents a trust investment. Backward compatibility is how you honor that investment while continuing to evolve. Treat it as a strategic commitment, not an engineering constraint.

Finly Insights Team is a group of software developers, cloud engineers, and technical writers with real hands-on experience in the tech industry. We specialize in cloud computing, cybersecurity, SaaS tools, AI automation, and API development. Every article we publish is thoroughly researched, written, and reviewed by people who have actually worked in these fields.